On Friday I mentioned Tim Wu’s op-ed from last week, which asked whether machines “have a constitutional right to free speech.” The question is posed in such a way that the obvious answer seems to be “no,” so it naturally drew responses that simply pose the question the other way: Timothy Lee at Ars Technica asks, “Do you lose free speech rights if you speak using a computer?”, and Julian Sanchez suggests that Wu’s argument would effectively remove First Amendment protection from any speech communicated via a machine. Paul Levy and Eugene Volokh similarly argue that while machines obviously don’t have speech rights, the people using the machines do, and Wu’s examples (e.g., Google’s search results) are the speech of the humans who designed the algorithms behind them.

I think the distinctions here are trickier than any of these pieces, including Wu’s, let on. (Frank Pasquale appears to agree.) My own view, as suggested in my previous post, is that at least for copyright purposes, the more the machine contributes to the substance of the content, the less it is the speech of the humans behind it. But the distinction both First Amendment law and copyright impose is binary: something is either your speech or not your speech. Trying to figure out exactly where that transition occurs — even in principle — is difficult.

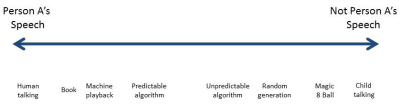

Let’s set up a spectrum of possibilities. So here’s the spectrum (click to enlarge):

At the extreme end, where we clearly have human expression worthy of First Amendment protection, is a human being talking to listeners present in the same room. From there you can move to speech recorded in some sort of format that can be understood by other people without the aid of a machine — a book, say, or a painting. That’s clearly speech too. And we’re still firmly in speech territory, as Volokh, Levy, and Lee all note, when we move from the book to speech recorded in some sort of format that is played back verbatim on a machine — films, emails, radio, etc.

Let’s visit the other end. Suppose Person A has a child, B. B says something: X. X is clearly B’s speech. But it is clearly not A’s speech, even though A had a lot of influence over how B thinks, and even if (if B is a minor) A might be held liable for B’s speech. The sentences that come out of the mouth of B are not speech with respect to A.

Let’s back up a step from this extreme. Take a Magic 8 Ball. A shakes up a Magic 8 Ball and reads it. Is that A’s speech? A generated a message by shaking the 8 Ball. But it’s not A’s speech; it’s the speech of the makers of the 8 Ball. We’re still not in the realm of A’s speech, even though A has now more directly caused a particular message to manifest itself.

Let’s suppose that instead of being simply the user of a device that generates predetermined outcomes, A is the creator of a device. But unlike the Magic 8 Ball, this device randomly generates messages. Perhaps it cuts them out of whole cloth, or perhaps it combs the web on a daily basis and randomly selects a sentence from the sites it visits, a bit like the “Reach for the nearest book, turn to page 53, go to the fifth full sentence” chain letters we all receive from time to time. Is the sentence the device posts A’s speech? It’s a little less certain than the previous hypothetical, but it still seems pretty clear that it isn’t. A hasn’t decided to post the sentence the device has found; indeed, A has no idea what the device is going to post, even though A created it. But here’s where things start to get interesting. The chain letter response is clearly the speech of A, even though A went through a number of mechanical steps to find it. (Here’s my result when I follow the procedure: “The engineer had failed to signal the presence of his train, which was proceeding at an unscheduled time and which subsequently collided with a scheduled passenger train.”) A, in reiterating that particular sentence, is consciously deciding to do so in a way that makes it A’s speech: choosing to participate in the game, and then, upon finding the sentence in question, deciding to post it for others to see. It’s a minimal communication, perhaps, but still a communication of meaning from A to others. But the random sentence selection device lacks this latter quality. A, in setting up the device, intends for it to do its thing; but there’s no conscious decision to send the particular result that it finds.

Does that mean the government could simply ban the operation of all random-message generation devices? Well, no, for the same reason the government can’t, at a whim, ban all operation of microwave ovens. There would at least be due process concerns. But if the government did decide to regulate random-message generation, it wouldn’t impinge on the free speech rights of A, the creator who does not consciously select the message being transmitted. (If that’s still troubling, I think it’s clearer if, instead of random message generation, we imagine the messages being posted by other people through, say, an access provider of some sort that just automatically routes the messages. Obviously those messages are the speech of the people who send them. But are they also the ISP’s speech, such that the ISP has standing to bring a First Amendment claim on behalf of itself? No one’s ever suggested that. Merely handling someone else’s speech, without selecting it, doesn’t make it yours.)

So much for the easy ones. Now for the hard cases: content generated by some sort of algorithm — say, a computer program. Obviously, the algorithm itself is the result of conscious choices made by its creators — what qualities should it look for? How much should they count? But the result of an automated implementation of that algorithm is not itself the conscious choice of the algorithm’s creators. Should that result count as their speech? We can perhaps think of two different sorts of algorithms here, those that lead to a predictable result and those that lead to an unpredictable result. Here’s an updated spectrum:

First, the predictable algorithms. Volokh and Lee give examples where a person designing a website selects a specific kind of content to put on the website — links to news stories about Senator Schmoe, or a “most-emailed” list — without knowing exactly what will get posted there. In other words, we have the conscious selection of a category but not specific instances of the category. Somewhat in the same realm is Julian Sanchez’s citation to video games: video games render their audiovisual displays on the fly, but the general nature of what is displayed is well known to the game designer in advance — even agonized over. (Unless that general nature is itself generated on the fly; that’s a future development I was anticipating in my previous post.) Is conscious selection of the topic — “various news stories about Senator Schmoe” — enough to make the content someone’s speech? That seems plausible, although the message conveyed is fairly minimal.

Volokh extrapolates from predictable outcomes to unpredictable ones as though it is a small step, but I think it’s actually a fairly large leap. Here’s Volokh: “Now say that, instead of just criticizing Schmoe or Acme, I set up my program to convey information about whatever person or company the reader wants — information that the program produces based on an algorithm I wrote, though coupled with some extra information that my colleagues at my company choose to provide. That too is just as constitutionally protected as the simple ‘stories about the Schmoe scandal’ Web site.” That’s far from clear. The person who inserts a widget on a webpage is making a statement based on their knowledge of the sort of content that will be in that space on the webpage: “Here are some stories about Senator Schmoe.” But the person who sets up a tool to respond to user input is not clearly making any statement at all. What is the statement being made by Google to me before I decide to search for rhododendrons?

It’s natural to talk about the Google search engine as though it responds to questions, but of course that’s just anthropomorphizing Google’s computers; the human input to Google’s search engine occurred well before I ever entered my query. The message that Google’s algorithm sends in response is completely unpredictable, in part because my query is unpredictable. It might as well be random. It’s not just that Google’s engineers do not know precisely what option I might select, out of 4 or 5 or even 100 options to choose from. It’s that the universe of options is effectively limitless, as is the range of responses that Google’s search engine might send. Who knows what Google’s crawlers have discovered about rhododendrons just before I send my query? Probably not the engineers at Google. It seems odd to say that whatever pops up on the Google results page for rhododendrons is the speech of engineers who possibly have never even heard of rhododendrons. It’s a bit like someone pointing at a book and saying, “I adopt the fifth full sentence on page 53 as my own,” without even having read the book first. Does that make the sentence in question their speech? I don’t see how, and that’s true even if a lot of care went into the various criteria used in the algorithm (why the fifth full sentence? why page 53?).

I’m picking on Google because Volokh and Wu focus their debate on search engines, but the issue goes well beyond search to any content generated by a computer that is opaque to the computer programmer. And that includes video games. Video game designers may know the layout of a virtual room in detail, and may know down to the fraction of a second when and where every monster may enter that room. But there will be aspects of the way the scene plays out that are beyond their control, or even prediction — where the player is looking from one second to the next, and what the player chooses to do. It would be wrong to include those unpredictable aspects as part of the game designer’s speech. The chasm between order and chaos is too far for speech to cross.

Cross-posted at Madisonian.net.